What are Neural Networks?

Inspired by biological neural networks, an artificial neural network is a computing system that “learns” to perform various tasks by considering examples, usually without being programmed with task-specific rules.

Artificial neural networks are inspired by the structure of the human brain and are the functional unit of deep learning.

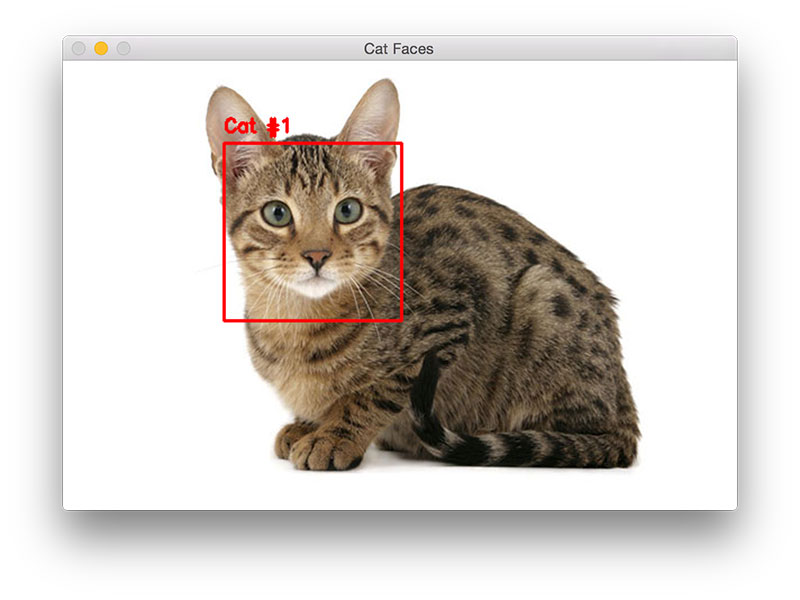

For example, consider image recognition, such as identifying images of cats.

The computing system might learn to identify images that contain cats by analyzing example images that have been manually labeled as "cat" or "no cat", and then use what it has learned to identify cats in other images. It does this without any prior knowledge of cats or their features, for example, that they have fur, tails, whiskers and cat-like faces.

Deep learning uses artificial neural networks, which mimic the behavior of the human brain, to solve complex, data-driven problems. Deep learning is itself a part of machine learning, which falls under the larger umbrella of artificial intelligence (AI).

Machine learning - An application of artificial intelligence (AI), machine learning gives systems the ability to automatically learn and improve from experience without being explicitly programmed. Machine learning focuses on the development of computer programs that can access data and use it to learn for themselves.

The process of learning begins with observations or data, such as examples, direct experience, or instruction, in which the system looks for patterns that help it make better decisions in the future. The primary aim is to allow computers to learn automatically, without human intervention or assistance, and adjust their actions accordingly.

Deep learning – Deep learning (also known as deep structured learning or hierarchical learning) is part of a broader family of machine learning methods based on artificial neural networks. In deep learning, learning can be supervised, semi-supervised or unsupervised. Deep learning algorithms use multiple layers to progressively extract higher-level features from the raw input.

For example, in image processing, lower layers may identify edges, while higher layers may identify concepts relevant to a human, such as digits, letters or faces.

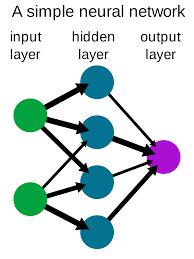

Structure of a Neural Network

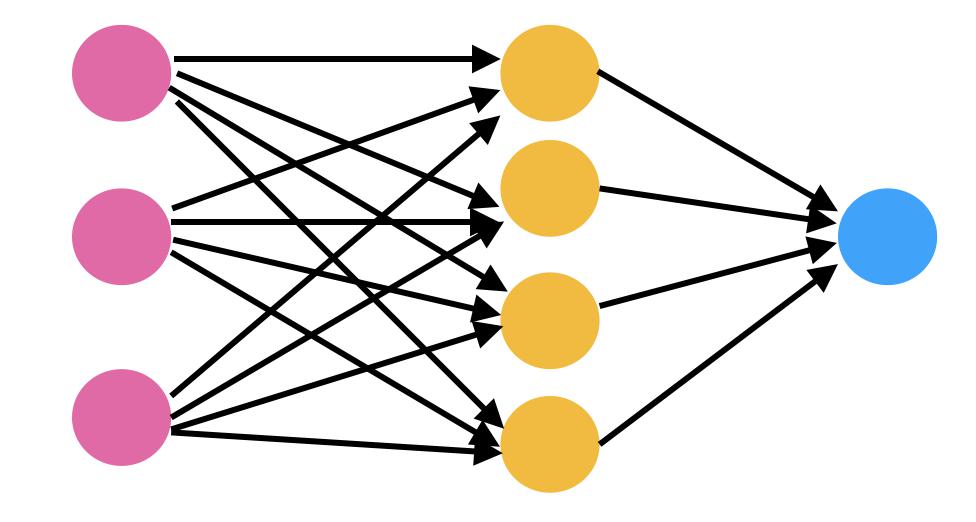

Neural networks are made of neurons, which are the core processing units of the network. A neural network basically consists of three kinds of layers: the input layer, one or more hidden layers and the output layer.

The input layer is where the input is fed to the network. The hidden layers, where most of the computation takes place, lie between the input and output layers, and the output layer produces the final result from the output of the last hidden layer. Each neuron is associated with a numeric value called a bias, which is added to the weighted sum of its inputs; the result is passed to a threshold function called the activation function.

The activation function determines whether a particular neuron should be activated or not. An activated neuron transmits data to the next layer.

Neurons in adjacent layers are connected by channels.
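As a rough illustration of the "activated or not" idea, the sketch below compares two activation functions: a hard threshold (step), which matches the description above, and the sigmoid, a common smooth alternative. The function names and the threshold of 0 are illustrative choices, not taken from any particular library.

```python
import math

def step(z: float, threshold: float = 0.0) -> float:
    """Hard threshold: the neuron is 'activated' (1.0) only if z exceeds the threshold."""
    return 1.0 if z > threshold else 0.0

def sigmoid(z: float) -> float:
    """Smooth alternative: maps any real z to a value between 0 and 1."""
    # Two algebraically equivalent forms, chosen so math.exp never overflows.
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)

for z in (-4.0, 0.0, 4.0):
    print(f"z = {z:+.1f}   step = {step(z):.0f}   sigmoid = {sigmoid(z):.3f}")
```

A hard threshold is easy to read but has zero gradient almost everywhere, which is one reason smooth functions such as the sigmoid are preferred when a network is trained with backpropagation.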

Working of Neural Networks with an Example

Basic steps:

- The input neurons represent the input, that is, the information we are trying to classify.

- Each number in the input neurons is multiplied by a weight at each synapse (channel).

- At each neuron in the next layer, we add up the weighted outputs of all synapses coming into that neuron, add a bias, and apply an activation function (commonly the sigmoid function) to the result, which squashes it into a value between 0 and 1. For a neuron with three inputs, the input sum is $z = w_1 x_1 + w_2 x_2 + w_3 x_3 + b_1$, and it is calculated for each neuron.

- The output of the activation function is treated as the input for the next layer of synapses.

- Continue until you reach the output.
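The steps above can be sketched in plain Python. This is a minimal illustration under a few assumptions: each layer is represented as a hypothetical `(weights, biases)` pair, every neuron uses the sigmoid, and the weight values in the usage example are made up.

```python
import math

def sigmoid(z: float) -> float:
    # Numerically stable sigmoid: squashes z into the range 0 to 1.
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)

def layer_forward(inputs, weights, biases):
    """One layer: for neuron j, z_j = w_j1*x_1 + w_j2*x_2 + ... + b_j, then sigmoid(z_j)."""
    outputs = []
    for w_row, b in zip(weights, biases):
        z = sum(w * x for w, x in zip(w_row, inputs)) + b  # input sum for this neuron
        outputs.append(sigmoid(z))                          # activation
    return outputs

def forward(inputs, layers):
    """Feed each layer's outputs into the next layer until the output layer is reached."""
    activations = inputs
    for weights, biases in layers:
        activations = layer_forward(activations, weights, biases)
    return activations

# Hypothetical network with made-up weights: 3 inputs -> 2 hidden neurons -> 1 output.
hidden = ([[0.2, -0.4, 0.1], [0.5, 0.3, -0.2]], [0.1, -0.1])
output = ([[0.7, -0.6]], [0.05])
print(forward([1.0, 0.5, -1.5], [hidden, output]))
```

Each weight row holds one neuron's incoming weights, so the length of a row must match the number of outputs from the previous layer.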

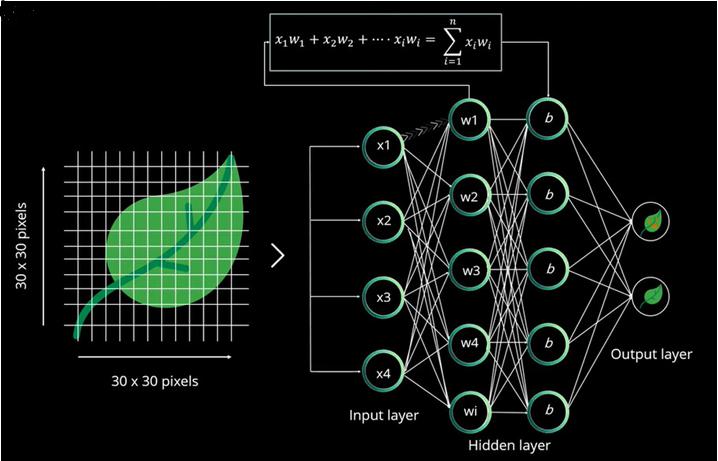

Consider the example of classifying a leaf as either a normal leaf or a defective leaf.

Here we provide a leaf image to the system, which classifies it based on its condition.

The image is divided into pixels based on its dimensions. For example, an image that is 28 pixels in height and width has 28 x 28 = 784 pixels, which are fed to the input layer. In this case the image is 30 x 30 pixels, so 900 pixel values are fed to the input layer.
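To make the pixel arithmetic concrete, here is a minimal sketch of turning a 2-D grid of pixel values into the flat list the input layer receives, one value per input neuron. The nested-list image representation and the function name `flatten` are assumptions for illustration.

```python
def flatten(image):
    """Row-major flattening: a height x width grid of pixels becomes one list of height * width values."""
    return [pixel for row in image for pixel in row]

# Hypothetical 30 x 30 grayscale leaf image; real pixel values would come from the photo.
leaf_image = [[0.0] * 30 for _ in range(30)]
input_values = flatten(leaf_image)
print(len(input_values))  # 900, one value per input neuron
```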

Each neuron in one layer is connected to the neurons in the next layer through channels with randomly initialized weights, which are used to calculate the input sum. This sum is sent to the next layer (the hidden layer), where each neuron is associated with a numerical value, the bias, that is added to the input sum.

The activation function decides which neurons are activated, and the values of the activated neurons are passed to the next layer. This process is known as forward propagation.

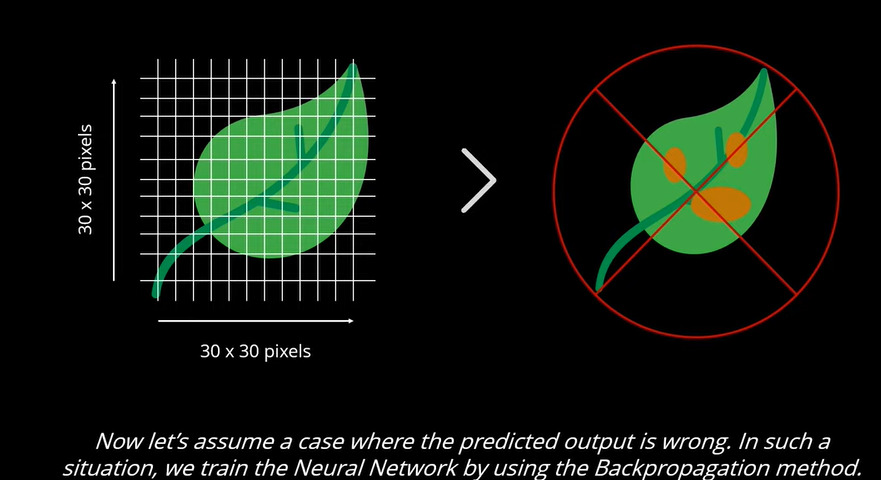

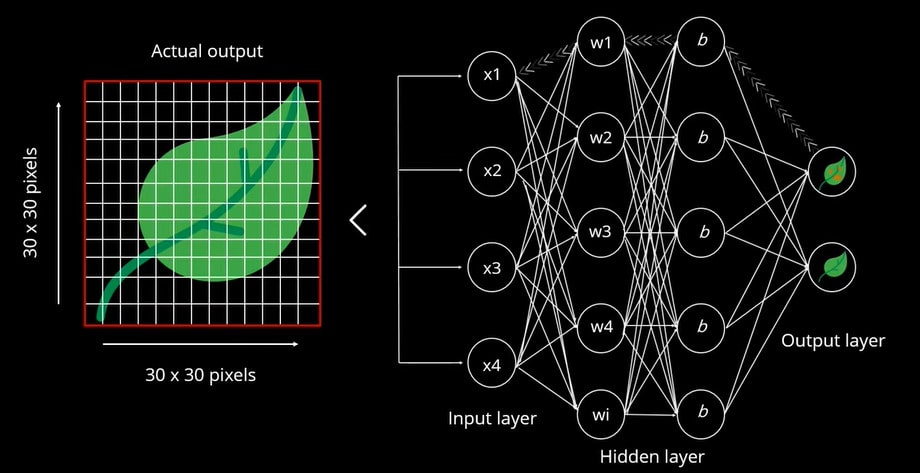

In the output layer, the neuron with the highest value determines the output; these values can be interpreted as probabilities. If the neuron with the highest probability gives the wrong output, the network has not yet been trained. In this case, the leaf is wrongly classified as defective, so the network needs to be trained before detection/classification can be carried out reliably.
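The text treats the output values as probabilities without saying how they are produced. One common choice, assumed here, is the softmax function, followed by picking the class with the highest probability. The class order and the raw scores below are hypothetical, chosen to mimic an untrained network that wrongly favours "defective leaf".

```python
import math

CLASSES = ["normal leaf", "defective leaf"]  # hypothetical order of the output neurons

def softmax(scores):
    """Convert raw output-layer values into probabilities that sum to 1."""
    m = max(scores)                           # subtracting the max avoids overflow in exp
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def predict(scores):
    """Pick the class whose output neuron has the highest probability."""
    probs = softmax(scores)
    best = max(range(len(probs)), key=lambda i: probs[i])
    return CLASSES[best], probs[best]

# Made-up scores from an untrained network that wrongly favours "defective leaf".
label, confidence = predict([0.3, 1.2])
print(label, round(confidence, 3))
```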

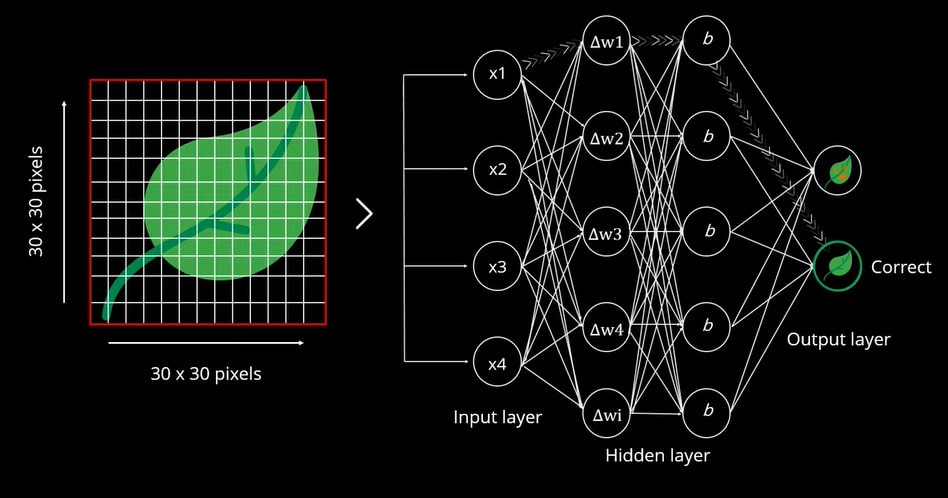

Backpropagation is then performed to correct the prediction: the predicted value is compared with the actual output, and the error is propagated backwards through the network to adjust the weights so that the error decreases. This is repeated iteratively until the network predicts the leaf correctly.

After the weights are updated based on the difference between the predicted and actual values, the network gives the correct result: a normal leaf.
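The training loop described above can be illustrated with a deliberately tiny network. This is a minimal sketch under several assumptions not stated in the text: a network with 2 inputs, 3 hidden neurons and 1 output, sigmoid activations, a squared-error loss, plain full-batch gradient descent with a learning rate of 1.0, and made-up two-number "leaf features" instead of real 900-pixel images. Each step adjusts the existing weights slightly rather than drawing new random ones.

```python
import math
import random

def sigmoid(z):
    # Numerically stable sigmoid.
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)

# Hypothetical toy data: two made-up features per leaf; target 1.0 = normal, 0.0 = defective.
DATA = [([0.9, 0.8], 1.0), ([0.8, 0.9], 1.0), ([0.2, 0.1], 0.0), ([0.1, 0.3], 0.0)]

def init_params(n_in, n_hidden, seed=0):
    """Random initial weights, zero biases: (w1, b1) for the hidden layer, (w2, b2) for the output."""
    rng = random.Random(seed)
    w1 = [[rng.uniform(-1, 1) for _ in range(n_in)] for _ in range(n_hidden)]
    w2 = [rng.uniform(-1, 1) for _ in range(n_hidden)]
    return w1, [0.0] * n_hidden, w2, 0.0

def forward(x, params):
    """Forward propagation: returns the hidden activations and the single output value."""
    w1, b1, w2, b2 = params
    h = [sigmoid(sum(w * xi for w, xi in zip(row, x)) + b) for row, b in zip(w1, b1)]
    y = sigmoid(sum(w * hj for w, hj in zip(w2, h)) + b2)
    return h, y

def mean_loss(params, data):
    """Mean of 0.5 * (prediction - target)^2 over the data set."""
    return sum(0.5 * (forward(x, params)[1] - t) ** 2 for x, t in data) / len(data)

def train_step(params, data, lr):
    """One full-batch gradient-descent step using gradients computed by backpropagation."""
    w1, b1, w2, b2 = params
    gw1 = [[0.0] * len(row) for row in w1]
    gb1 = [0.0] * len(b1)
    gw2 = [0.0] * len(w2)
    gb2 = 0.0
    for x, t in data:
        h, y = forward(x, params)
        d_out = (y - t) * y * (1.0 - y)  # error signal at the output neuron
        gb2 += d_out
        for j, hj in enumerate(h):
            gw2[j] += d_out * hj
            d_hid = d_out * w2[j] * hj * (1.0 - hj)  # error propagated back to hidden neuron j
            gb1[j] += d_hid
            for i, xi in enumerate(x):
                gw1[j][i] += d_hid * xi
    n = len(data)
    # Move every parameter a small step against its averaged gradient.
    w1 = [[w - lr * g / n for w, g in zip(row, grow)] for row, grow in zip(w1, gw1)]
    b1 = [b - lr * g / n for b, g in zip(b1, gb1)]
    w2 = [w - lr * g / n for w, g in zip(w2, gw2)]
    return w1, b1, w2, b2 - lr * gb2 / n

params = init_params(n_in=2, n_hidden=3)
loss_before = mean_loss(params, DATA)
for _ in range(5000):
    params = train_step(params, DATA, lr=1.0)
loss_after = mean_loss(params, DATA)
print(f"loss: {loss_before:.4f} -> {loss_after:.4f}")
print([round(forward(x, params)[1], 2) for x, _ in DATA])
```

Real image classifiers use far more inputs and neurons, and are usually built with a library such as PyTorch or TensorFlow, which computes these gradients automatically.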

Some Applications of Neural Networks

- Google Translate

- Face recognition

- Self-driving cars

- Object detection

- Music composition

- Speech recognition

- Spell checking

- Character recognition

Advantages

- Because of their parallel nature, a neural network can often continue to work when one of its elements fails.

- Neural networks learn and do not need to be reprogrammed.

- They can be applied to a wide range of applications.

- Neural networks perform tasks that a linear program cannot.

Disadvantages

- Neural networks need training to operate.

- Large neural networks require long processing times.

- Neural networks require large amounts of data to train.
